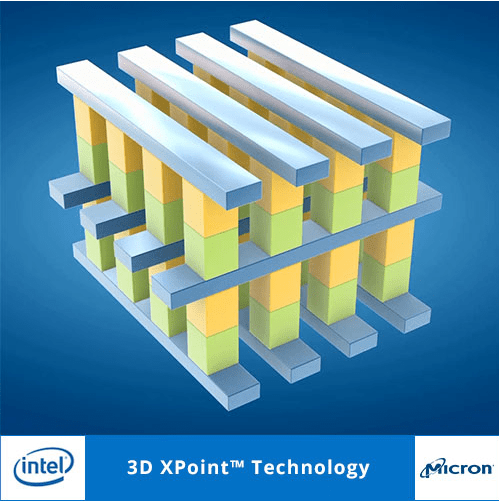

Intel and Micron, in July 2015, announced a revolutionary memory technology, called 3D XPoint [Pronounced CrossPoint, XPoint from now on here.] and they have recently made further announcements regarding the first introduction of products employing this technology.

I’ve seen discussions that talk about how XPoint is going to “Revolutionize” our thinking about computing. I’m thinking, er…. No. It will make things better and faster, but I don’t see a “Revolution”. I’m not suggesting that I’m pessimistic about XPoint. I’m suggesting that XPoint does not really create any new ways to Organize, Share or Distribute data, so what I think we are going to see is an Evolution where XPoint is used with methods we already have to Organize, Share and Distribute data.

Data Organization Methods

There are three major ways we organize data in and between computers. I’m sure you know about these, but let’s review.

Heaps, File Systems, and Databases.

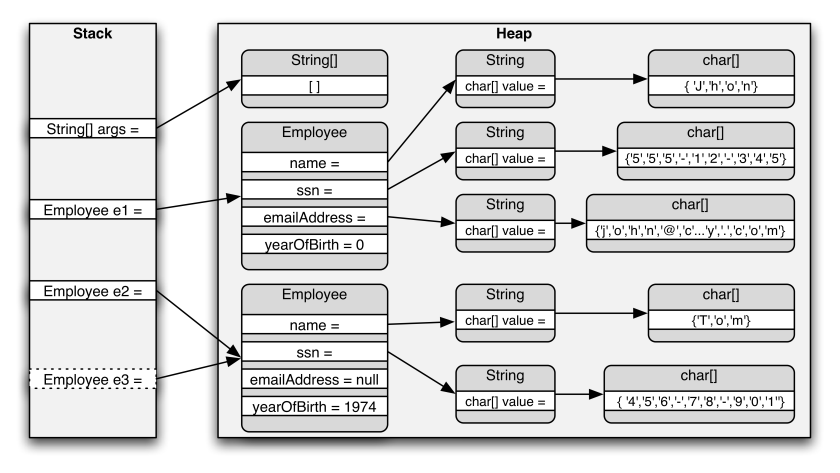

Heaps are “anonymous” collections of data which are only available by the address originally obtained when the heap fragment was allocated. There is no naming, no attributes, and no means of dealing with fragmentation. The application that uses the heap needs to do these vital functions. The properties of anonymity makes sharing difficult or impossible between “Processes” since the “name” of a heap element is the address in a particular process address space. Security is typically not managed for heap objects. You have the address implies you have access. One can argue about kernel vs user heap, etc. but in general items are only shared toward the inner security ring.

File Systems are designed to keep track of data chunks which vary widely in Size, Lifetime and Access patterns. Fragmentation is managed, and in some cases aggressively mitigated. There is a naming structure which is crucial for access and sharing. Security of access is a big deal, so reaching around the file system to get the bytes is a big no-no. Files have only a few attributes and as far as the file system is concerned, the file content is un-interpreted data. Sharing is a big deal. Between processes, between machines across networks of any size and scope.

Another reminder. File System APIs treat the file as a stream of bytes, but the files are managed as fixed size blocks by the library and lower layers of software all the way down to the hardware. This is the modern way. Back in the OS/360 days in the 1960s each file was defined completely as a stream of records according to the application requirements and this added needless complexity to every application which had to carry these definitions to read the simplest file. The definitions for every file was part of the JCL or Job Control Language program that accompanied the program that was being run. JCL programs size often exceeded the size of the program itself. Input files often had card-reader sized records of 80 characters; output files typically used line printer sized records of 133 characters, and these size records were written directly to the disks or other magnetic media. Just as they had been written to tape in the previous generation. We no longer use those methods. The file system abstracts the data byte stream of variable size records and record terminators to fixed size blocks and manages fragmentation, integrity and a host of worries for us.

Databases are designed to keep track of typically small data chunks that are related in an application specific way with other data chunks. Fragmentation is aggressively managed and there is a large and complex set of names and indices that allow application access to the data chunks. As for file systems, security and sharing are big deals. Integrity is a really big deal. Transactions, logs, rollback and other features abound. SQL databases are so popular and high performance that often very unlikely applications, such as the data for Massively Multiplayer Online Role Playing Games [MMOs] are stored in SQL databases, when at first look at the problem, one might guess that something else was called for. Databases are widely shared.

Obvious Examples

So we have websites that are current popular means of accessing large datasets. No websites that I’m aware of, expose Heaps to the world.

Youtube is an example of File Sharing, with a database holding the many additional attributes that a normal file system does not carry. But clearly a file system holds the videos.

Amazon shopping service is an example of a pure database application. The goods are objects held in warehouses rather than in the digital domain, although the 3D printer folks would like to change that too.

Whither 3D XPoint?

Folks have waxed hyperbolic about how XPoint allows permanent, fast, non-block oriented storage addressable directly and that this will change how we do computing.

Wait a second. We didn’t invent blocks on disks only because disks had to store things in blocks. We needed to block things up to control fragmentation. Pages of virtual memory are handled as fixed size blocks for the same reason – to control fragmentation. And if you have a file system or database type problem, then you will still need BLOCK access to the storage, whether or not the underlying technology is Heap Like. Just because XPoint can be a heap, doesn’t mean that we will ever really see it used as a heap. Large, long-lived, structure-less, anonymous heaps have pretty limited usefulness outside of a single process or process group inside a single machine. In any serious application, we are going to see one of the above smart abstractions on top of XPoint.

I think we are going to see XPoint caching file system and database indexes, and caching data to and fro among Petabyte databases and file systems.

I don’t see the processors executing directly from XPoint memory, except in the case of IoT if small XPoint devices are very very cheap. I don’t really see a lot of money in IoT compared with selling Multi- Terabyte XPoint Database / File System caches to folks that need those.

So while XPoint can be addressed like RAM with a single address, I don’t think any software is really going to see it this way.

If XPoint gets cheap enough, then we’ll replace all those Nand Flash SSDs with it because it’s non-volatile and doesn’t wear out. Some of us will plug them in using SATA even tho that’s hopelessly slow just because our machines have those ports. And others will use NVME or some other high speed port. But the XPoint will look like a block store because a file system will be abstracted onto it. DRAM is 10 times faster than XPoint and we are going to use the fast stuff for the instructions and heap.

That’s my view anyway.

:ww