Note: While the banner and the images for this post were created by AI, the text was written, for better or worse, solely by me.

As you may have too, I’ve been watching AI’s progress at generating images and even videos for a couple of years now. I haven’t been interested in “signing up” for one of the services, since it is pretty clear that doing anything serious would run into a lot of money. However, I have a couple of extra computers with GPUs, so I thought it was time to try running AI image generation locally.

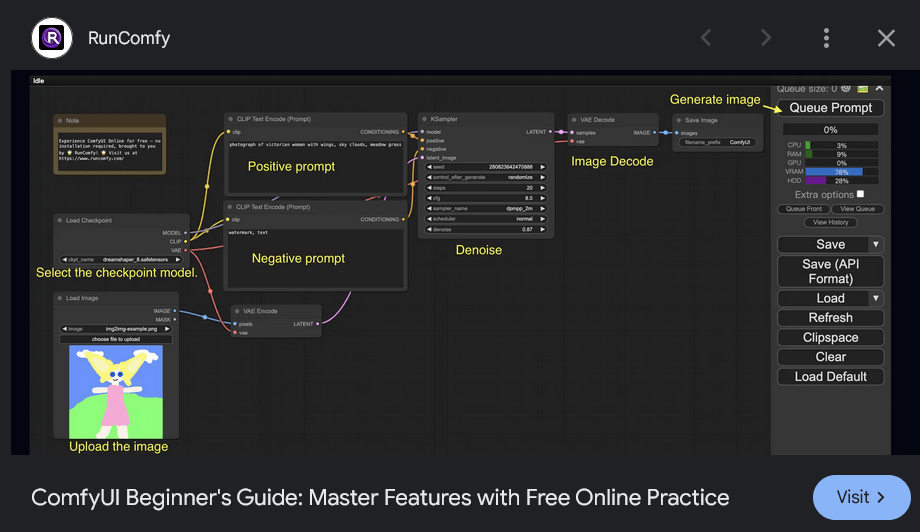

ComfyUI Seems Too Complex

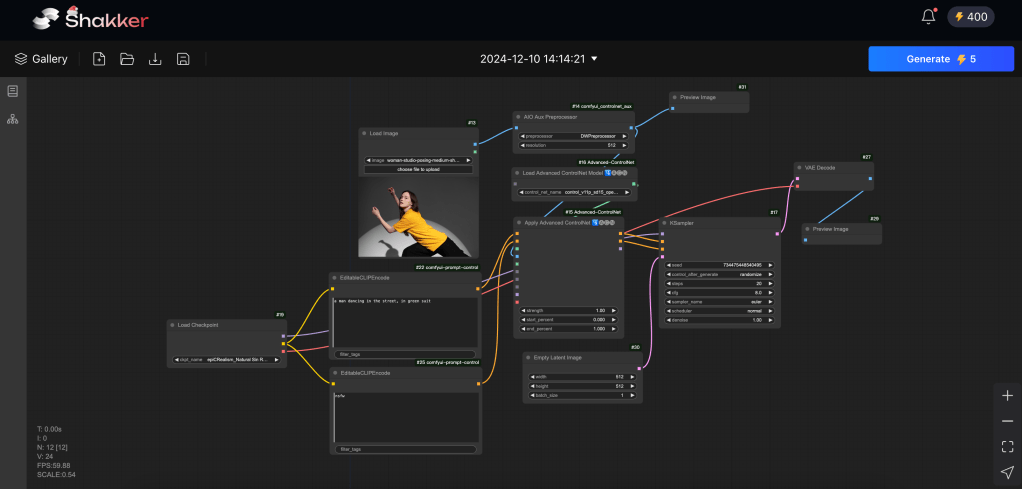

I took a look at ComfyUI. It looks way too complex. While I’m familiar with the concept of connecting the boxes – from texture creation in Blender among other places – I’d rather just check some boxes and paste in a prompt.

After some looking around, I found Pinokio.
