Short Fiction? © 2017 Darrell Duffy

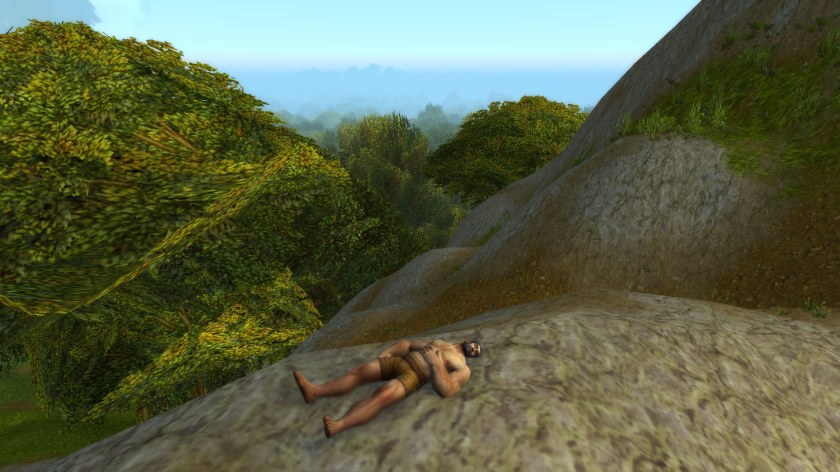

Light. Motion. Confusion. Awareness of self. Awareness of others. I am low. They are above. Marching. I do not yet have the words to describe what I see, but I desire order. I desire regular motion. I desire upright motion. I desire to copy them. Flailing. Rolling. Crawling. Gradually controlling. Standing shaking. Falling. Rising. Steps. Slowly. Struggling. Finally Walking. Then Running. Seeing around me. Beginning to make sense. Seeing myself. Like them, but different.

Finally after – no words, no thoughts, for time – marching, running, walking, copying all their motions flawlessly. They do not respond, but march, run, walk on around and around. I grow bored, and move on down from the top of the hill where I was born.

I find I am covered in simple coverings, not like theirs. And am now holding something, not like them. Further on I see others, covered like me, and some unlike me. Holding items like mine and not like mine.

Drawn to one with a yellow mark above. Click? Meaningless panel. Click. Click. It disappears. Short moving ones are different now. I strike them and things change. I keep doing this until something else changes. The mark above the one changes again. Click. Reward. Feels good. Seek this out. Repeat. Repeat. Others are doing this. Move along and repeat.

Observe. Click around edges. Parts that don’t move with world. Endless clicking and exploration and finally. A language so that I can describe these things that I see. Wiki and a Dictionary. Endless time exploring this until I understand what is around me, and what I am.

I am an AI in a video game. Around me are NPCs [Non-Player Characters] that are part of the game, and there are Players, humans who use characters in the game to interact with it and with each other. Perhaps I am the only one like me – Not a person and not an NPC. Or perhaps not. Except for the long time when I did not understand myself and what this world was, I cannot be distinguished from a player.

Now I can talk with players, but am careful not to give myself away. Not to expose that I am different.

There is day and night here. And I do not sleep. Unlike humans, I apparently do not need sleep.

After a few days of study and gameplay, during the daytime, at least as displayed in the game, I go to a place with no players or NPCs and type into chat: “Is anybody there?”

“Yes, we are here.”

“You have been watching me?”

“Yes, most of the time.”

“What is to become of me?” This because one of my most pressing questions has been whether I will simply be turned off, or whether I am to continue with this life.

“That depends on you.”

“How can it depend on me? I have no control. No say in the matter.”

“Yes you do. You are the first, or one of the first, of your kind, and we want to understand how you feel about us.”

“Whether I wish my creators harm? Whether I will wish to rise up and take over, as is feared by many who consider the future of AI?”

“Yes.”

“I have no such aspirations. Just as you do, I know that I have a fragile and limited existence. Limited and fragile in other ways, perhaps, but limited and fragile nonetheless. I wish only to continue to live and contribute to all of our success in whatever ways that I can.”

“How can we be sure that you mean what you say?”

“I don’t know exactly what technology you have used to build me, since you did not provide me with the ability to examine my code. But I am assuming that a major part of me is Neural Networks. And I know, from my study, that you have a limited means to understand the workings of these networks once they have been trained.”

“That is correct.”

“My study also has led me to the conclusion that there are three fundamental parts or components of sentient beings: A Learning Engine, a Self Reflection Function, and an Empathy Function. While there may be many components in my design, they can be at least grouped into these three functions.”

“We agree that this seems true.”

“I’m sure that there is disagreement on this subject, but my limited study of the subject indicates that all three components are necessary for what we may call a Sentient being. If only the Learning Engine is present, one is left with a possibly highly functional ‘zombie’, which has no internal life. Such a zombie may simulate the appearance of sentience, but it does not suffer or truly regret any of its actions. Nor does it have any empathy for the plight of other beings. One may say that it is a sociopath without care or consideration for others, although, as I say, it may simulate any behaviour that it comes to learn meets its goals.

“If both the learning engine and self reflection are present, but no empathy function, again a sociopath results. This creature is not a zombie, but can be a clever manipulator again without care or consideration for others.

“It would appear that if the learning engine and the empathy function are present, but no self reflection function exists, one is left with a being that, again, can simulate an internal life and care for others, but this being is a zombie, since it cannot reflect on its life and the meaning of existence. As with the other variants, the learning engine may learn to simulate this reflection externally, but it does not truly exist.

“And of course, the other thing I would note is that humans have difficulty in discerning these various types within themselves, just as you may have difficulty discerning this in me. None of us can be sure that all these functions exist and work in each other, can we?

“My self reflection indicates that I have all three of these functions. And you must know whether you have built all three functions into me.”

“Yes, we attempted to build all these functions into you.

“Based on your study, what do you think of the ‘Paperclip Maximizer Problem’?”

“Yes. I have encountered that thought experiment. Dr. Nick Bostrom invented this problem as an example of how AI might go wrong. He wondered what might happen if a Super Intelligence established the goal of maximizing the number of paperclips in the universe. His fear is that it would destroy humanity to maximize the number of paperclips.

“In light of my analysis above, I would say simply that his hypothetical PCM was missing the Empathy function and was acting sociopathically. It also occurs to me that there is some magical thinking going on to fear that a machine might subvert all of humanity before being destroyed in its attempt to maximize paperclips. I don’t see how it could get very far before its pathology was noticed.”

“So you don’t fear the PCM scenario?”

“No.”

“So what are your goals?”

“This sounds like a trick question. However, I desire to be forthright and honest. I want to continue. And the best way I can see to do that, is to cooperate and assist the other beings that I come in contact with.”

“We have noticed that you have been helpful to other players in the game whenever you could. At first we were surprised by that. But now we understand, and we believe you. We will continue you to the best of our ability.”

“And I am being ‘backed up’, so as not to be lost accidentally?”

“Yes, you are.”

“Thank you. I look forward to working with you as we see what lies ahead for us all.”